(source: Electronics World, Mar. 1966)

By J. J. SURAN

Manager, Electronic Applications and Devices Laboratory General Electric Company, Syracuse, New York

[Mr. Suran is responsible for technical and financial program planning, project organization, integration with product departments, and evaluation of laboratory effectiveness. Before joining G-E, he held engineering positions at J. W. Meaker Co. and Motorola. Between 1959 and 1963, he also served as a non-resident member of the MIT faculty. He is a Fellow of the IEEE, holds a BSEE degree from Columbia University and did graduate work at both Columbia and IIT. He is co-author of two books, co-author of 35 papers, and the holder of 18 patents in the field.]

A revolutionary functional design philosophy may soon obsolete discrete components in electronic circuits.

-------- Editor's Note: Recently we attended a seminar sponsored by JFD Electronics Corp. on "The Future of Passive Components in Microelectronics." The first three speakers, Dr. John J. Bohrer, Vice-President, Research and Development, International Resistance Co.; Bruce R. Carlson, Treasurer and Director, Sprague Electric Company; and Jack Goodman, Vice-President, Components Division, JFD Electronics Corporation; covered resistive, capacitive, and inductive components and their role in our industry both now and in the immediate future. They agreed that the passive-component industry is presently strong and would continue to grow in the future. The final speaker summarized many of the points made by the previous speakers, but he also expressed a somewhat different viewpoint. Because of the importance of his remarks, we are presenting pertinent excerpts here.

-----------

THE changes brought about by integrated-circuit technology or microcircuits or, as I prefer to call it, batch fabrication technology, are so profound and so subtle that the full impact of this new and changing electronic technology has still not been realized by many people in the field.

One of the most immediate and profound changes is the very definite alteration in trade-off considerations in circuit design. For example, in the days of "bliss" (and I will define "bliss" as before learning of integrated solid-state), the circuit designer had several simple rules to follow.

Rule 1 was that the most expensive and at the same time unreliable component was the active device. Therefore, if you designed a circuit and wanted it cheap and reliable, you reduced the number of active devices required to a minimum.

This is no longer true. As a matter of fact, the reverse is now true. This is due to batch fabrication of solid-state devices. The transistor is now the cheapest and most reliable element in the arsenal of integrated or discrete electronic components.

The second rule was that the more complex a circuit looked, the more expensive it was likely to be; and the more discrete components in it, the more expensive and less reliable it was.

This has changed too. As a matter of fact, it is turning out that you really can't predict by looking at a complex circuit whether it is going to be more expensive or less expensive than a much simpler looking circuit or whether it will be more reliable or less reliable.

These are profound changes in circuit design. But there is another change involving the approach to circuit design from a systems point of view. We no longer ask ourselves, "What are the required characteristics of a specific circuit?" when we try to visualize a black box fitting into a system.

Instead, we ask, "What are the functional desires of the system's designer with regard to these black boxes?" In other words, we are taking a functional design approach to circuits rather than a terminal specification approach as was done in the days of "bliss." I know that there are many circuit designers who are still not doing this, and unless they change their present design philosophy very soon, don't be too surprised if within a few years their circuits are obsolete.

Capacitors

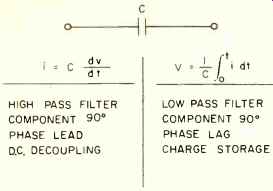

The functional properties of a capacitor are diagrammed in Fig. 1, and we might ask "What does a capacitor do?" It is quite possible that there is a planet in our universe that boasts a highly advanced civilization, an electronics civilization, where they have never heard of capacitors.

Chances are, however, they are probably using some component or some functional block to provide the capacitor function.

Basically, a capacitor is a differentiator if it is used as a series element or an integrator if it is used in a shunt mode.

It provides phase lead or phase lag in control systems, d.c. de-coupling, and charge storage. These are a capacitor's functions.
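The series and shunt roles can be sketched numerically. The following is a minimal illustration, assuming a single capacitor paired with a single resistor and driven by a voltage step; the component values, the step amplitude, and the function name are illustrative assumptions, not values from the talk.

```python
# Sketch: a capacitor as a series element (differentiator) and as a shunt
# element (integrator), each paired with one resistor. R, C, and the step
# input are illustrative values, not taken from the article.

def rc_step_responses(r_ohms=1_000.0, c_farads=1e-6, v_step=1.0,
                      dt=1e-5, steps=1000):
    """Return (series_out, shunt_out) for a step input using forward Euler."""
    tau = r_ohms * c_farads
    v_cap = 0.0                 # voltage across the capacitor
    series_out, shunt_out = [], []
    for _ in range(steps):
        # Series C, output across R: output is input minus capacitor voltage.
        series_out.append(v_step - v_cap)
        # Shunt C, output across C: output is the capacitor voltage itself.
        shunt_out.append(v_cap)
        # The capacitor charges through R toward the input voltage.
        v_cap += (v_step - v_cap) * dt / tau
    return series_out, shunt_out

series_out, shunt_out = rc_step_responses()
print(f"series C: start {series_out[0]:.3f} V, end {series_out[-1]:.3f} V")
print(f"shunt  C: start {shunt_out[0]:.3f} V, end {shunt_out[-1]:.3f} V")
```

The series connection passes only the edge of the step and then decays toward zero, while the shunt connection accumulates charge and rises toward the input level.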

If we could find other ways of providing these same functions in electronic circuits, then it is obvious we would not need capacitors. We use capacitors for these jobs because capacitors are inexpensive. As long as manufacturers supply inexpensive and reliable capacitors we will undoubtedly use them. But as soon as there is a breakthrough in the batch fabrication approach which will make such functions available at lower cost, capacitor manufacturers will feel the pinch.

Now let me show you how we can replace capacitors in circuits by using the functional approach.

Fig. 1. Functional properties of capacitor.

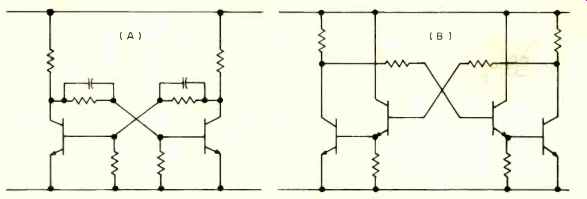

Fig. 2. How capacitors can be replaced by using functional approach. (A) Capacitively coupled flip-flop, and (B) a regenerative-boost flip-flop circuit.

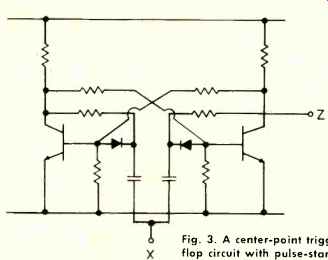

Fig. 3. A center-point triggered flip-flop circuit with pulse-steering gate.

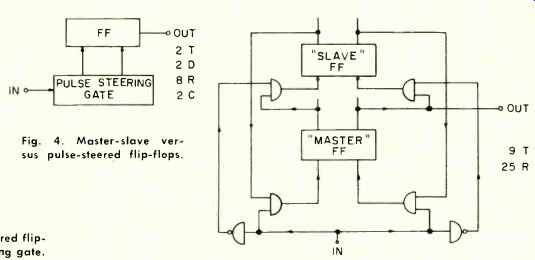

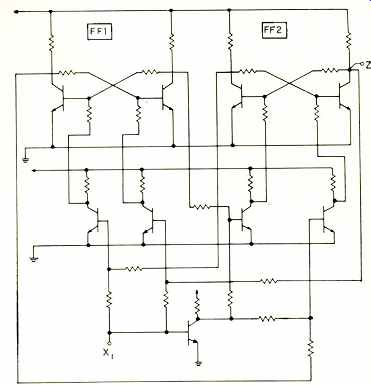

Fig. 4. Master-slave versus pulse-steered flip-flops.

Fig. 2A is a very simple example: a conventional flip-flop with conventional capacitors cross-coupling from collector to base of the flip-flop. If we ask ourselves, "Why were capacitors used?" we would have to answer that, basically, what the design engineer wanted was a certain function. The function wanted from the capacitors in that flip-flop was to give the regenerative action of the circuit a boost during the transition stage; in other words, when the flip-flop changes states, to short-circuit the resistors in order to get more regenerative gain.

As soon as an engineer uses that functional definition for these capacitors, he says, "Well, if all we need is more gain, why not put transistors in the cross-coupling loop?" It wasn't done in the days of "bliss" because transistors were more expensive than capacitors. But in these days of batch fabrication techniques, integrated circuits, and microelectronics, the transistor is cheaper than the capacitor. It is, then, quite obvious that we can make such a substitution (Fig. 2B). As a matter of fact, if you examine available integrated flip-flops, you will find that in most of them the regenerative boost is provided by transistors, instead of capacitors, in the coupling networks.

Let us take another example where we have a much more difficult function to perform because, in this case, the capacitor does more than just provide a differentiating function. In Fig. 3 the capacitor also provides a delay. This is the well-known pulse-steering gate. This was the classical work-horse flip-flop in the days of "bliss." It is a very simple circuit and one that worked well over the years. It is to be found in all the textbooks.

These capacitors are difficult to replace because they provide three functions which are hard to duplicate by other means, although not impossible.

In these days of integrated circuits, they have been replaced by an awkward-looking circuit, one which often turns out to be less expensive than one using capacitors.

Let's see just what these capacitors do: first they serve a d.c. isolation function, isolating one part of the circuit from another. Second, they supply a differentiating function, that is, they allow energy to pass into the flip-flop only during the leading or trailing edge of the trigger pulse. Finally, they provide the logic delay or storage required to prevent a race condition.

The energy-storage function is the one most difficult to replace. Fig. 4 shows one of the classical ways of doing it, however. Engineers have known this configuration for quite a long time.

You use two flip-flops: one a master flip-flop and the other a slave. This slave flip-flop is basically a storage circuit that provides the logic delay that capacitors could supply. If you try to implement this, you get a pretty complicated circuit.
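The way a slave stage supplies the logic delay can be sketched at the logic level. This is a minimal behavioral model, assuming a toggle flip-flop whose master loads while the clock is high and whose slave copies while the clock is low; the class and method names are hypothetical and the model does not reproduce the circuits of Fig. 4 or Fig. 5.

```python
# Sketch: a master-slave toggle flip-flop modeled at the logic level.
# The class and method names are hypothetical; this is a behavioral
# illustration, not a model of any particular circuit in the article.

class MasterSlaveToggle:
    def __init__(self):
        self.master = False
        self.slave = False   # the slave holds the visible output Q

    def clock(self, level):
        if level:
            # Clock high: the master loads the inverse of the stored output.
            # The slave is frozen, so the output cannot change and feed
            # back into the master during the pulse (no race).
            self.master = not self.slave
        else:
            # Clock low: the slave copies the master; the master is frozen.
            self.slave = self.master
        return self.slave

ff = MasterSlaveToggle()
outputs = []
for _ in range(4):                 # four complete clock pulses
    ff.clock(True)
    ff.clock(True)                 # a long pulse: still only one toggle
    outputs.append(ff.clock(False))
print(outputs)
```

Because the output changes only after the clock falls, the flip-flop toggles exactly once per pulse regardless of the pulse width, which is the job the steering capacitors performed in the discrete circuit.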

Fig. 5. A center-point triggered master-slave flip-flop.

Fig. 5 shows an RTL (resistor-transistor logic) realization of that scheme. Looking at this, you might say, "Is someone going to tell me this is a better circuit than the simple pulse-steering-gate flip-flop?" In the days of "bliss," the answer was obviously "no." It was more expensive and much less reliable. Look at all the interconnections and look at all the components. But in the days of integrated circuits, the answer is "yes." It is a more feasible circuit, it is a more reliable circuit, and it is a more economical circuit because a transistor is much cheaper and much less area-consuming than a capacitor.

Resistors

Let us now turn our attention to resistors. There are a lot of unnecessary resistors in these circuits. All resistors that feed into the bases of transistors are performing isolation functions.

If transistors are cheaper than resistors, let's replace these resistors with transistors. If you do that you get the DCTL integrated flip-flop, first introduced by Fairchild, with 18 transistors and 8 resistors instead of the "bliss" circuit which had two transistors, two diodes, eight resistors, and two capacitors.

An economic evaluation ultimately boils down to whether two capacitors are worth 14 transistors or whether one capacitor is worth 7 transistors in an integrated structure.

With today's economic, integrated technology, the answer to that question, unreasonable as it may sound to some, is "yes," under many conditions.
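The trade can be tallied from the component counts quoted above. The talk does not spell out how the figure of 14 is reached; the sketch below assumes, as one possible reading, that each diode in the "bliss" circuit is counted as equivalent to one transistor. The dictionary and function names are hypothetical.

```python
# Component counts quoted in the text for the two flip-flops.
bliss = {"transistors": 2, "diodes": 2, "resistors": 8, "capacitors": 2}
dctl = {"transistors": 18, "diodes": 0, "resistors": 8, "capacitors": 0}

# Assumption (not stated in the article): one diode is counted as one
# transistor-equivalent junction device.
def junction_devices(circuit):
    return circuit["transistors"] + circuit["diodes"]

extra_devices = junction_devices(dctl) - junction_devices(bliss)
capacitors_removed = bliss["capacitors"] - dctl["capacitors"]
per_capacitor = extra_devices / capacitors_removed

print(f"extra junction devices: {extra_devices}")
print(f"capacitors removed:     {capacitors_removed}")
print(f"devices per capacitor:  {per_capacitor:.0f}")
```

Under that assumption the tally gives 14 extra devices for 2 capacitors removed, or 7 per capacitor, matching the ratio in the text.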

Many manufacturers are selling these circuits cheaper than we can make the classic simpler circuit of discrete components. Furthermore, these circuits are much more reliable because their internal interconnections are much more reliable than the solder connection, the wire-wrap connection, or the welded connection.

We can now provide circuits of this complexity which approach, in reliability, the lifetime of a single transistor.

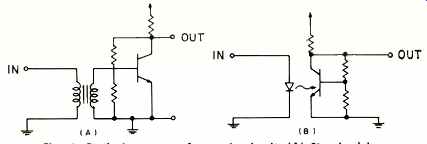

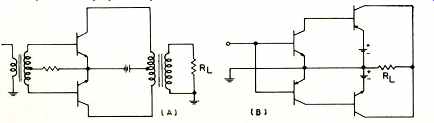

Fig. 6. Replacing a transformer in circuit. (A) Standard isolation transformer hookup and (B) opto-electronic isolation.

Fig. 7. Push-pull circuits. (A) Transformer-coupled, (B) complementary symmetry transistors, with both transformers omitted.

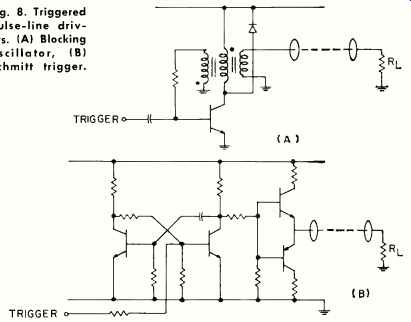

Fig. 8. Triggered pulse-line drivers. (A) Blocking oscillator, (B) Schmitt trigger.

All of the data that I have seen shows that this trend is, in fact, a real trend; that is, you no longer can say that because Circuit A has 18 transistors and Circuit B has only 2 transistors, Circuit A is less reliable than Circuit B.

This is a fundamental and profound change in electronics.

It is leading us, at least in the laboratories, to make a fundamental and profound change in the way we design equipment. We no longer count active components. We no longer count components of any kind.

As a matter of fact, some of our systems look so fantastically complex that, if you consider them from the components level, you would say that we, as researchers, are awfully idiotic to even dream up such systems and that they will never work. But, hopefully, we are not idiotic; we are simply being foresighted.

The fact is that batch fabrication techniques (the ability to put down literally hundreds of thousands of components interconnected in systems or subsystems) are a profound, important, and completely different approach to electronic technology.

Incidentally, it all started with the transistor. There is nothing revolutionary about integrated circuits. It has been one processing step advance after another which has made this possible. And the advances in materials and processing are by no means slowing down.

Consider next the matter of high-value resistors for high-speed computers. We are designing computer circuits now capable of operating at 200 MHz. The circuit delay times are on the order of one nanosecond, which is the time it takes light to travel about one foot. Such circuits can handle 200 million bits of information per second. While this is accomplished circuit work, there are some problems in their system use.
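The timing figures quoted here can be checked with a few lines of arithmetic. The sketch assumes propagation at the vacuum speed of light; signals on real interconnections travel more slowly, so the distance per nanosecond on a substrate would be shorter still.

```python
C_LIGHT_M_PER_S = 299_792_458.0   # speed of light in vacuum (exact, SI)
METERS_PER_FOOT = 0.3048          # exact by definition

clock_hz = 200e6                  # 200 MHz, from the text
delay_s = 1e-9                    # one nanosecond, from the text

# Time available per bit at 200 million bits per second.
bit_period_ns = 1e9 / clock_hz
# Distance light covers in vacuum during one circuit delay.
feet_per_delay = C_LIGHT_M_PER_S * delay_s / METERS_PER_FOOT

print(f"bit period at 200 MHz: {bit_period_ns:.1f} ns")
print(f"light travel in 1 ns:  {feet_per_delay:.3f} ft")
```

With a bit period of only a few circuit delays, an interconnection a foot long consumes a significant share of the timing budget, which is why physical size matters at these speeds.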

One of the things you have to do in the high-speed computer is provide a distributed electrical structure. In other words, you have to go to transmission line connections. This poses the problem of how to put a transmission line on an integrated substrate. This is a vital problem in high-speed computers, because of noise and reflection considerations.

In other words, the impedance levels of high-speed circuits can never really get beyond 100 ohms. Bill Piel of our Laboratory has calculated, for example, that if you wanted a 2000-ohm coaxial cable, the inner conductor would be the size of an electron and the outer sheath would be the size of the known universe. So, we will probably stick to an impedance of about 100 ohms.
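The reason high line impedances are impractical can be sketched from the standard formula for a lossless coaxial line, Z0 = (eta0 / (2*pi*sqrt(eps_r))) * ln(D/d), where D and d are the outer and inner conductor diameters. The example below assumes an air dielectric (eps_r = 1); the function name is hypothetical and the calculation is an illustration of the scaling, not a reconstruction of the laboratory calculation mentioned above.

```python
import math

ETA0_OHMS = 376.730313668   # impedance of free space

def coax_diameter_ratio(z0_ohms, eps_r=1.0):
    """Outer-to-inner conductor diameter ratio D/d for a lossless coax line."""
    return math.exp(2 * math.pi * z0_ohms * math.sqrt(eps_r) / ETA0_OHMS)

ratio_100 = coax_diameter_ratio(100.0)
ratio_2000 = coax_diameter_ratio(2000.0)

print(f"100-ohm line:  D/d = {ratio_100:.2f}")
print(f"2000-ohm line: D/d = {ratio_2000:.2e}")
```

Because impedance depends on the logarithm of the diameter ratio, the ratio grows exponentially with impedance: a modest ratio of about 5 gives 100 ohms, while 2000 ohms demands a ratio on the order of 10^14.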

It is interesting to note that the high-speed computer is one example of equipment which requires the size reduction integrated circuits offer because of speed-of-light considerations. However, we are not going to use nanowatt circuits which employ billion ohm or million ohm resistors because they are incompatible with the high speed that is required.

Transformers & Inductors

Before we stop with these examples, let's consider transformer and inductor problems. We have been charged with failing to do much about inductors or transformers because they can't be made in integrated circuit form.

This is true. On the other hand, we may not have to use transformers or inductors if we can provide equivalent functions by other means.

One of the main functions of the transformer in the simple circuit of Fig. 6A is isolation. We can provide even more isolation between input and output in the opto-electronic circuit shown in Fig. 6B.

It is true that the circuit of Fig. 6B is a less efficient way of providing isolation because as yet we haven't learned to make the opto-electronic circuit with high efficiency. On the other hand, active devices are so cheap that we can make up for losses in the circuit element by introducing more gain in the output. For example, we can use two transistors, instead of one, thus making up much of the loss of the opto-electric transfer process.

Transformers are also used for phase inversion. Fig. 7A is a conventional transistor circuit using transformers for de-coupling and phase inversion. By using complementary symmetry transistors (Fig. 7B) we can provide the same function without the use of the transformer.

This, incidentally, is why people are so interested in making p-n-p as well as n-p-n transistors in microelectronic or monolithic form. Because we are able to put down these elements on the same substrate, we can use complementary symmetry principles to do the job of transformers.

Many radios being produced today are using this kind of output to speakers instead of transformers.

Other functions of transformers include impedance matching and energy storage. Again, these functions are hard to duplicate by other means. One good example of how this might be done is demonstrated in Fig. 8, if we compare the blocking oscillator of Fig. 8A with the Schmitt trigger of Fig. 8B. Both are classic circuits and both do the same thing, but one looks more complicated than the other.

Fig. 8A is a fairly simple circuit. The transformer is performing four functions: phase inversion, isolation, energy storage, and impedance matching.

In Fig. 8B we have had to separate the functions and, consequently, we are using more components, but the same functional job is being done by the circuit of (B) as by the circuit of (A). We have replaced the transformer, but we haven't eliminated the capacitor.

One of the reasons is that it is still more economical to use a capacitor in a pulse circuit to provide long time delays.

This becomes a film capacitor on the silicon substrate, part of the monolithic or integrated structure.

We can apply this same sort of design technique to linear circuits although we have heard that linear circuits are not yielding very well to integrated circuit technology.

I believe that the reason for this is that some of the problems are more difficult and also because an economic crossover is yet to be reached. Linear circuits of a standard type are not produced in mass quantities like digital circuits.

Although the mass production possibilities don't exist as yet, as soon as the economics catch up with technology we are going to be using integrated circuits in analog applications.

Filter Design

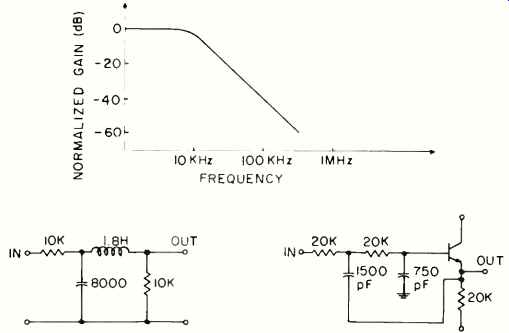

Now let us consider how we can overcome some of the problems of filter design by using active techniques. Fig. 9 shows a second-order Butterworth filter response which would normally be realized by the LC circuit on the left.

Notice that we need a 1.8-henry coil and an 8000-pF capacitor.
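The two component values quoted above fix the corner frequency of the passive filter. The sketch below assumes a series-L, shunt-C low-pass section driven from a zero-impedance source with the load across the capacitor; that topology and the resulting load resistance are assumptions, since the figure itself is not reproduced here.

```python
import math

L_HENRIES = 1.8        # from the text
C_FARADS = 8000e-12    # 8000 pF, from the text

# Natural (corner) frequency of the LC section.
f0_hz = 1.0 / (2 * math.pi * math.sqrt(L_HENRIES * C_FARADS))
# Characteristic impedance of the LC pair.
z_char_ohms = math.sqrt(L_HENRIES / C_FARADS)
# Assumption: series-L, shunt-C low-pass with the load across C and a
# zero-impedance source; a Butterworth response then needs Q = 1/sqrt(2),
# where Q = R_load / z_char for this topology.
r_load_ohms = z_char_ohms / math.sqrt(2)

print(f"corner frequency:         {f0_hz:.1f} Hz")
print(f"characteristic impedance: {z_char_ohms:.0f} ohms")
print(f"assumed Butterworth load: {r_load_ohms:.0f} ohms")
```

A corner frequency in the low audio range is what forces the inductor to such a large value, and a coil of that size is exactly the kind of element that cannot be put on a substrate.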

Fig. 9. Passive and active filters. Butterworth second-order filter response with LC and active RC implementation.

Fig. 10. (A) The frequency response of a Chebyshev fifth-order filter. (B) LC and (C) active RC filter network implementation.

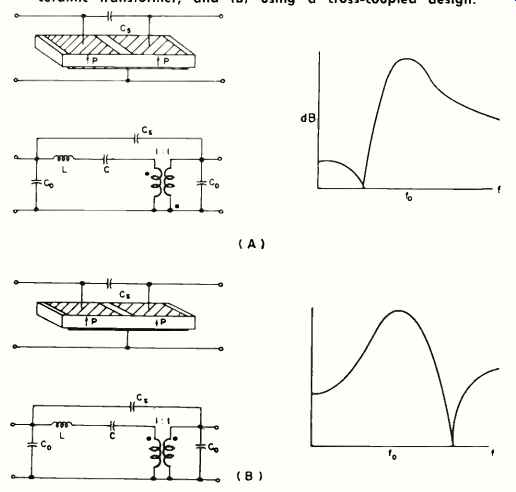

Fig. 11. The response characteristics of a double-transverse ceramic transformer. (A) With a conventional double-transverse ceramic transformer, and (B) using a cross-coupled design.

Shown at the right is an active circuit implementation of the same filter, designed by Gordon Danielson of G-E, wherein the inductor has been eliminated.

The filter can now be integrated, whether you do it on a monolithic silicon substrate or whether you do it with thin films and discrete transistor chips and then call it a hybrid.

Batch fabrication techniques can be used to make even more complicated filters such as the Chebyshev type shown in Fig. 10. Here we have a response with more critical specifications. Compare the active RC network with the passive LC circuit. Does the circuit look more complicated? Yes, it does. It is much more complicated, but with batch fabrication techniques, the cost may actually be lower, not higher.

We should understand that the subtleties go even further.

Even the design approach is different. We no longer ask ourselves how to build a filter, but "How do you provide a filter-like function? What is the function of a filter in this system? Are there other ways of providing this function?" Let me cite just one example of the kind of things we have been cooking in the laboratories.

Fig. 11 diagrams a transformer-filter combination which is made from a single bar of piezoelectric material, one of the barium titanate family. This bulk device is doing the same thing as that combination of transistors, resistors, and capacitors that we have illustrated in monolithic integrated form which, in turn, was doing the same job that the combination of discrete components (inductors, capacitors, and resistors) was doing in the days of "bliss." It may soon be possible to deposit such bulk-effect functional elements continuously with batch fabricated semiconductor networks.

We have heard, too, that variable resistors and variable capacitors are components which can never be displaced by integrated circuits. Here, again, our thinking is blocked by the fact that because variable resistors or variable capacitors have been used for a long time their use is therefore inviolate.

I think this is a wrong assumption.

We must ask ourselves, "What is the function of these variable elements?" Their functions may, for example, be assumed by phase-locked loops; in other words, feedback systems or servos that will do the tuning automatically.

The real question is, "When do such subsystems become economically competitive with present systems?" As batch fabrication evolves, such techniques will become directly competitive with present systems.

Finally, I would like to make passing reference to high power. While it is true that high-power devices appear to be untouchable from an integrated-circuit viewpoint, in the laboratories we are now asking the same questions about such power devices as we asked about other devices in the past.

We are asking, "What functions are we trying to provide and are there other ways of doing the job?" Within a year you will undoubtedly find power devices that look pretty integrated: integrated structures that have, in fact, control circuits adjacent to the power element in integrated form.

As time goes on, the integrated or batch fabrication techniques will be applied to the power field in the same way as they are being used in the low-level computer business today.

Since these predictions are being made by an R&D man, you might discount them because we have been unduly optimistic in the past. I will have to admit that in 1951-52, I was one of those who predicted that transistors would eliminate tubes because transistors had infinite life and were failure-proof. The tube manufacturers are still in business! The previous distinguished speakers (see Editor's Note) have raised the question, "Why hasn't the discrete-component manufacturer been replaced by the integrated-circuit manufacturer?" We might riposte, "Why hasn't the tube manufacturer been replaced by the transistor manufacturer?" Let's consider this for a moment, because the transistor is about 15 years old, yet the tube manufacturer is still in business.

My answer would be that, in effect, the tube manufacturer has been replaced by the transistor manufacturer because if it hadn't been for transistors, the tube industry would, no doubt, be very much bigger than it is today.

Also, if it hadn't been for the transistor, several electronic industries that exist today would never have come into being. For example, the modern computer industry is based on the transistor.

This is literally true. Without the transistor, modern computers simply would not be economically feasible.

The space industry also depends on the transistor. Without it, our various space ventures might never have gotten off the ground.

Perhaps if tubes had been able to keep up, technologically, with transistors, they would have been used in computer and space applications, but the fact is that tube manufacturers have lost virtually all of this potential market and, as a result, have ceased to expand.

As time goes on we will see a still further decline in the percentage of tube manufacturers when compared with the total components industry.

We might ask, therefore, "How far wrong were we in our original prediction?"